Accelerating the Future: A Guide to AI Infrastructure

I. The Core of High-Performance Inference

Production AI demands infrastructure that can handle thousands of concurrent requests with millisecond latency.

Triton Inference Server

NVIDIA Triton Inference Server is a widely used open-source server for production model serving. Key features include:

Multi-Framework Support: Run PyTorch, TensorFlow, and ONNX models simultaneously on a single server.

Dynamic Batching: Triton aggregates individual requests into larger batches on the fly to boost throughput. This keeps the GPU well utilized without requiring clients to batch their own requests.
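To make the batching idea concrete, here is a minimal sketch in Python. It is not Triton's scheduler; the function name and the parameters max_batch_size and max_delay are hypothetical, chosen only to illustrate the two usual triggers for dispatching a batch: the batch is full, or the oldest queued request has waited long enough.

```python
def form_batches(arrivals, max_batch_size, max_delay):
    """Group (request_id, arrival_time) pairs into batches.

    A batch is closed when it reaches max_batch_size, or when a new
    request arrives after the oldest queued request's deadline.
    Assumes arrivals are sorted by arrival_time (illustrative only).
    """
    batches = []
    current = []
    deadline = None
    for request_id, arrival_time in arrivals:
        # The oldest queued request waited too long: dispatch what we have.
        if current and arrival_time > deadline:
            batches.append(current)
            current = []
        # The first request in a new batch sets the dispatch deadline.
        if not current:
            deadline = arrival_time + max_delay
        current.append(request_id)
        # The batch is full: dispatch immediately.
        if len(current) == max_batch_size:
            batches.append(current)
            current = []
    if current:
        batches.append(current)
    return batches


# Hypothetical arrival times in seconds.
arrivals = [("a", 0.000), ("b", 0.001), ("c", 0.002), ("d", 0.010), ("e", 0.011)]
delay_limited = form_batches(arrivals, max_batch_size=4, max_delay=0.005)
size_limited = form_batches(arrivals, max_batch_size=2, max_delay=0.005)
print(delay_limited)
print(size_limited)
```

The trade-off is visible in the two runs: a larger batch size with a short delay groups bursts together, while a small batch size dispatches sooner but does less work per GPU call.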

Model Versioning: Multiple versions of a model can be served side by side, which allows seamless updates and live A/B testing without service downtime.

TensorRT Optimization

For the highest GPU inference performance, models can be compiled with TensorRT. It optimizes a model for the target GPU by fusing layers and automatically selecting the fastest available kernels.
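Layer fusion can be illustrated with a small worked example. The sketch below is not TensorRT code and does not show how TensorRT is implemented; it only demonstrates the general idea on scalars, assuming a linear layer followed by a batch-normalization step. All function names and values are hypothetical. The two operations are folded into a single linear layer that produces the same output with one step instead of two.

```python
import math


def linear_then_batchnorm(x, w, b, gamma, beta, mean, var, eps=1e-5):
    """Unfused path: a linear layer followed by batch normalization."""
    y = w * x + b
    return gamma * (y - mean) / math.sqrt(var + eps) + beta


def fold_batchnorm(w, b, gamma, beta, mean, var, eps=1e-5):
    """Fold the normalization into the linear layer's weight and bias."""
    scale = gamma / math.sqrt(var + eps)
    return w * scale, (b - mean) * scale + beta


# Hypothetical parameter values for illustration.
params = dict(w=2.0, b=0.5, gamma=1.5, beta=-0.25, mean=0.1, var=4.0)
fused_w, fused_b = fold_batchnorm(**params)

x = 3.0
unfused_output = linear_then_batchnorm(x, **params)
fused_output = fused_w * x + fused_b  # one multiply-add instead of two layers
print(unfused_output, fused_output)
```

Fewer separate operations generally means fewer kernel launches and fewer round trips to GPU memory, which is where much of the benefit of fusion comes from.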

II. Orchestration and Automation

Managing a cluster of GPUs manually is prone to error and "configuration drift." Modern DevOps relies on automated operators to maintain consistency.

The NVIDIA GPU Operator

In Kubernetes, the GPU Operator installs GPU drivers and runtimes automatically.

Consistency: To avoid driver mismatches in a cluster, use the GPU Operator so that every node runs identical driver and runtime versions.

Self-Healing: It monitors node health and automatically provisions drivers when new hardware is added to the cluster.
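The kind of drift the operator is meant to prevent can be sketched as a simple check. The example below is not part of the GPU Operator; it assumes you have already collected a mapping of node name to driver version by some other means, and the node names and the inventory itself are hypothetical.

```python
from collections import defaultdict


def find_driver_mismatches(node_drivers):
    """Return {driver_version: [nodes]} if more than one version is present.

    Returns an empty dict when every node reports the same version.
    """
    by_version = defaultdict(list)
    for node, version in sorted(node_drivers.items()):
        by_version[version].append(node)
    return dict(by_version) if len(by_version) > 1 else {}


# Hypothetical inventory: one node is running an older driver.
inventory = {
    "gpu-node-1": "535.129.03",
    "gpu-node-2": "535.129.03",
    "gpu-node-3": "525.105.17",
}
mismatches = find_driver_mismatches(inventory)
for version, nodes in mismatches.items():
    print(f"driver {version}: {', '.join(nodes)}")
```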

Package Management with Helm

Helm simplifies deploying complex AI stacks such as Triton or MLflow on Kubernetes. It packages all the necessary resources (services, deployments, and ingress) into a single, version-controlled unit called a chart.
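As a rough illustration of what a version-pinned deployment looks like, the sketch below assembles a helm upgrade --install command in Python without running it. The release name, chart reference, namespace, chart version, and values file are all hypothetical placeholders; substitute the ones from your own chart repository.

```python
import shlex


def helm_upgrade_command(release, chart, namespace, chart_version, values_file=None):
    """Build an idempotent, version-pinned Helm install/upgrade command."""
    cmd = [
        "helm", "upgrade", "--install", release, chart,
        "--namespace", namespace, "--create-namespace",
        "--version", chart_version,  # pin the chart so every deploy is reproducible
    ]
    if values_file:
        cmd += ["--values", values_file]  # environment-specific overrides
    return cmd


# Hypothetical names throughout; nothing is executed here.
cmd = helm_upgrade_command(
    release="inference",
    chart="example-repo/triton",
    namespace="ml-serving",
    chart_version="1.2.3",
    values_file="values-prod.yaml",
)
print(shlex.join(cmd))
```

Pinning the chart version and keeping the values file in source control is what makes the deployment a single reviewable, repeatable unit.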

III. Hardware Architecture: DGX & SuperPOD

Building an AI data center requires specialized hardware to prevent data bottlenecks between processors.

DGX Server Architecture

A DGX server is a purpose-built AI system that typically has 4–8 NVLink-connected GPUs.

The Interconnect: For full all-to-all GPU connectivity, these systems combine NVLink with NVSwitch. Every GPU in the server can then communicate with any other GPU at full NVLink bandwidth, so software can treat the GPUs' memory much like a single pool.

Scaling to SuperPOD

To scale beyond a single server, SuperPOD clusters connect DGX nodes with InfiniBand networking. InfiniBand provides the ultra-low latency and RDMA capabilities required for massive parallel training across hundreds of GPUs.
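A back-of-the-envelope calculation shows why inter-node bandwidth matters for parallel training. The sketch below assumes gradients are synchronized with a ring all-reduce, which is one common algorithm and not necessarily what a given cluster uses, and it ignores latency and overlap with compute. The payload size and link speed are hypothetical numbers, not measurements.

```python
def ring_allreduce_seconds(payload_bytes, num_workers, link_bytes_per_second):
    """Estimate bandwidth-bound time for one ring all-reduce.

    In a ring all-reduce each worker sends and receives about
    2 * (N - 1) / N times the payload size.
    """
    if num_workers < 2:
        return 0.0  # nothing to synchronize with a single worker
    traffic = 2 * (num_workers - 1) / num_workers * payload_bytes
    return traffic / link_bytes_per_second


# Hypothetical: 1 GB of gradients, 4 workers, two different link speeds.
slow_link = ring_allreduce_seconds(1e9, 4, 1e9)    # 1 GB/s link
fast_link = ring_allreduce_seconds(1e9, 4, 25e9)   # 25 GB/s link
print(f"slow link: {slow_link:.3f} s per step, fast link: {fast_link:.3f} s per step")
```

Because this cost is paid on every training step, a slower network between nodes translates directly into GPUs waiting on communication.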

IV. Troubleshooting Performance Bottlenecks

Even the best hardware can underperform if the software pipeline is not tuned.

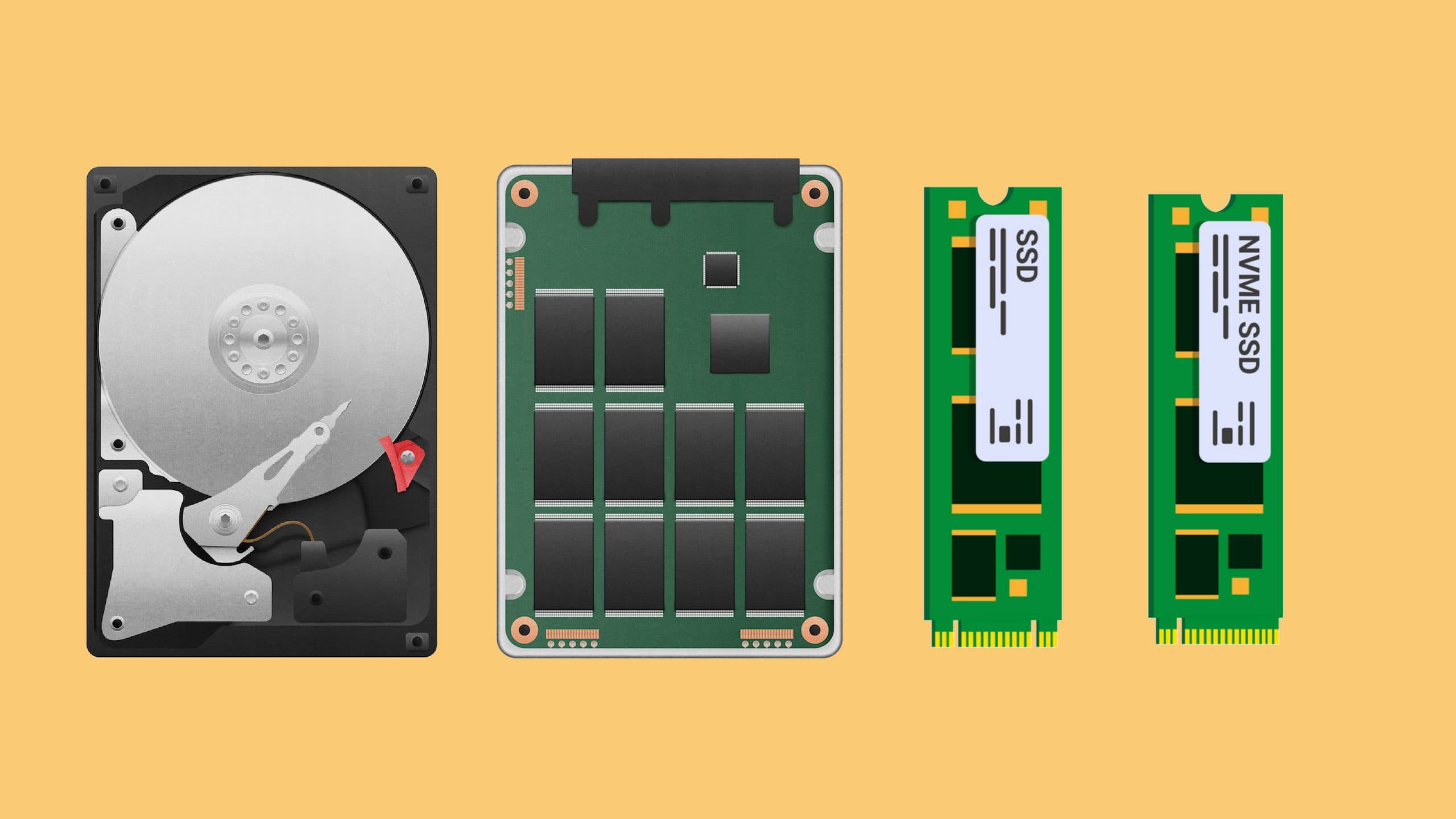

GPU Idle States: If your monitoring shows the GPU is idle, it suggests a data pipeline or batching issue upstream. The GPU is likely "starving" because the CPU or disk cannot fetch data fast enough.
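One simple way to quantify starvation is to sample GPU utilization over time and count how often it is near zero. The sketch below assumes the samples were collected as plain integers, one per line, for example from nvidia-smi --query-gpu=utilization.gpu --format=csv,noheader,nounits run in a loop. The function name, the 10% idle threshold, and the sample values are hypothetical.

```python
def idle_fraction(samples_text, idle_threshold=10):
    """Return the fraction of utilization samples below idle_threshold percent."""
    values = [int(line.strip()) for line in samples_text.splitlines() if line.strip()]
    if not values:
        raise ValueError("no utilization samples provided")
    idle = sum(1 for value in values if value < idle_threshold)
    return idle / len(values)


# Hypothetical samples: the GPU alternates between busy and nearly idle.
samples = "95\n3\n0\n88\n2\n"
fraction = idle_fraction(samples)
print(f"GPU idle in {fraction:.0%} of samples")
```

A high idle fraction during a job that should be compute-bound points toward the input pipeline or batching configuration rather than the model itself.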

Container Visibility: If a container cannot detect the GPU, it is likely missing the --gpus all flag in the runtime command.

Driver Failures: A common driver-version container failure shows the error "CUDA driver version is insufficient". This indicates the host driver is too old for the CUDA version inside the container.
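The driver-version failure described above comes down to a version comparison, which can be sketched as follows. The minimum driver version for a given CUDA release must be taken from NVIDIA's compatibility documentation; the version strings below are hypothetical placeholders, and the function names are illustrative.

```python
def parse_version(text):
    """Turn a dotted version string such as '535.129.03' into a tuple of ints."""
    return tuple(int(part) for part in text.strip().split("."))


def driver_is_sufficient(host_driver, minimum_required):
    """True if the host driver is at least the minimum the container needs."""
    return parse_version(host_driver) >= parse_version(minimum_required)


# Hypothetical values: look up the real minimum for your CUDA version.
minimum_for_container = "525.60.13"
new_host_ok = driver_is_sufficient("535.129.03", minimum_for_container)
old_host_ok = driver_is_sufficient("470.82.01", minimum_for_container)
print(new_host_ok, old_host_ok)
```

Comparing numeric tuples rather than raw strings avoids mistakes such as treating "99.0" as newer than "525.0".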

Advanced Profiling: For detailed GPU kernel profiling, developers should use Nsight Compute to identify specific code-level bottlenecks.